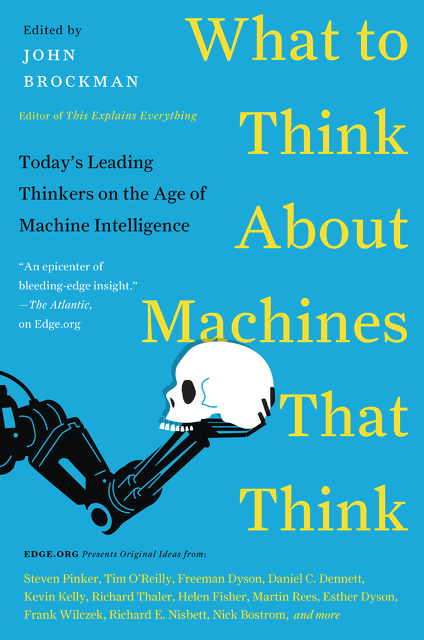

There are tasks, even work, best done by machines that can think, at least in the sense of sorting, matching, and solving certain decision and diagnostic problems beyond the cognitive abilities of most (all?) humans. The algorithms of Amazon, Google, Facebook, et al. build on but surpass the wisdom of crowds in speed and possibly accuracy. With machines that do some of our thinking and some of our work, we may yet approach the Marxian utopia that frees us from the kind of boring and dehumanizing labor that so many contemporary individuals must bear.

But this liberation comes with potential costs. Human welfare is more than the replacement of workers with machines. It also requires attention to how those who lose their jobs are going to support themselves and their children, and to how they are going to spend the time they once spent at the workplace. The first issue is potentially resolved by a guaranteed basic income, an answer that raises the question of how we as societies distribute and redistribute our wealth and how we govern ourselves. The second issue is even more complicated. It is certainly not Marx's simplistic notion of fishing in the afternoon and philosophizing over dinner. Humans, not machines, must think hard here about education, leisure, and the kinds of work that machines cannot do well or perhaps at all. Bread and circuses may placate a population, but in that case machines that think may create a society we do not really want, be it dystopian or harmlessly vacuous. Machines depend on design architecture; so do societies. And that is the responsibility of humans, not machines.

There is also the question of what values machines possess and what masters (or mistresses) they serve. Many decisions, albeit not all, presume commitments and values of some kind. These, too, must be introduced and thus are dependent (at least initially) on the values of the humans who create and manage the machines. Drones are designed to attack and to surveil, but attack and surveil whom? With the right machines, we can expand literacy and knowledge deeper and wider into the world's population. But who determines the content of what we learn and appropriate as fact? A facile answer is that decentralized competition means we choose what to learn and from which program. Yet competition is more likely to create than to inhibit echo chambers of self-reinforcing beliefs and understandings. The challenge is how to teach humans to have curiosity about competing paradigms and to think in ways that allow them to arbitrate among competing contents.

Machines that think may and should take over tasks they do better than humans. Liberation from unnecessary and dehumanizing toil has long been a human goal and a major impetus to innovation. Supplementing the limited decision-making, diagnostic, and choice skills of individuals is an equally worthy goal. However, while AI may reduce the cognitive stress on humans, it does not eliminate the human responsibility to ensure that humans improve their capacity to think and make reasonable judgments based on values and empathy. Machines that think create the need for regimes of accountability we have not yet engineered and societal, that is human, responsibility for consequences we have not yet foreseen.