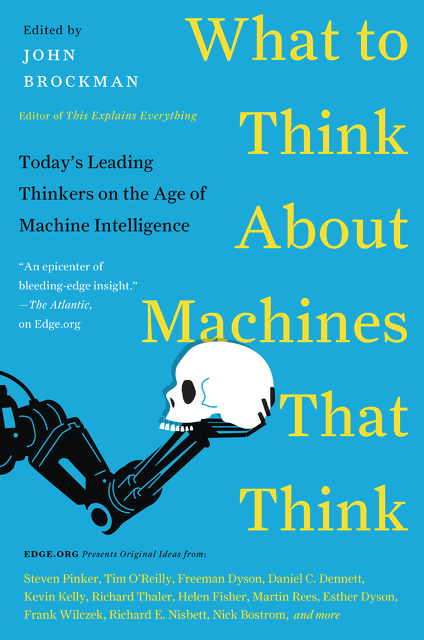

At what point do we say a machine can think? When it can calculate things, when it can understand contextual cues and adjust its behaviour accordingly, when it can both mimic and evoke emotions? I think the answer to the overall question depends on what we mean by thinking. There are plenty of conscious (system two) processes that a machine can do better, more accurately and with less bias than we can. People are already investing in developing AI machines to replace stock traders, which may be the first time anyone has seriously thought about mechanising a white collar job. But a machine cannot think in an automatic (system one) way: we don't fully understand the automatic processes that drive the way we behave and "think", so we cannot programme a machine to behave as humans do.

The key question, then, is this: if a machine can think in a system two way at the speed of a human's system one, then in some ways isn't its "thinking" superior to ours?

Well, context surely matters. For some things yes; for others no. Machines won't be myopic; they could clean things up for us environmentally; they wouldn't stereotype or be judgmental and could really get at addressing misery; they could help us overcome errors in affective forecasting; and so on. But on the other hand, we might still not like a computer. What if a poet and a machine could produce the exact same poem? The effect on another human being is almost certainly less if the poem is computer generated and the reader knows this, because knowledge of the author colours the lens through which the poem is read and interpreted.