Events: Seminars

"To arrive at the edge of the world's knowledge, seek out the most complex and sophisticated minds, put them in a room together, and have them ask each other the questions they are asking themselves."

HEADCON '14

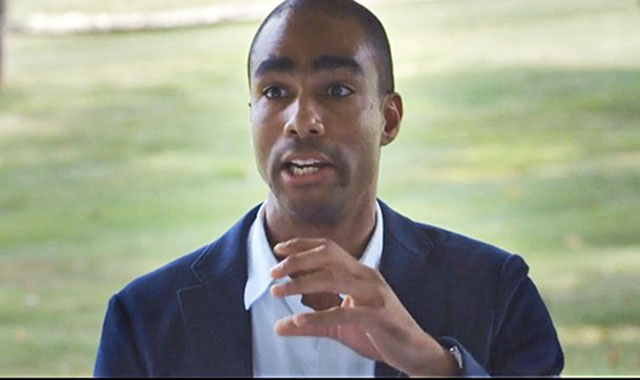

In September a group of social scientists gathered for HEADCON '14, an Edge Conference at Eastover Farm. Speakers addressed a range of topics concerning the social (or moral, or emotional) brain: Sarah-Jayne Blakemore: "The Teenager's Sense Of Social Self"; Lawrence Ian Reed: "The Face Of Emotion"; Molly Crockett: "The Neuroscience of Moral Decision Making"; Hugo Mercier: "Toward The Seamless Integration Of The Sciences"; Jennifer Jacquet: "Shaming At Scale"; Simone Schnall: "Moral Intuitions, Replication, and the Scientific Study of Human Nature"; David Rand: "How Do You Change People's Minds About What Is Right And Wrong?"; L.A. Paul: "The Transformative Experience"; Michael McCullough: "Two Cheers For Falsification". Also participating as "kibitzers" were four speakers from HEADCON '13, the previous year's event: Fiery Cushman, Joshua Knobe, David Pizarro, and Laurie Santos.

We are now pleased to present the program in its entirety, nearly six hours of Edge Video and a downloadable PDF of the 55,000-word transcript.

[6 hours]

John Brockman, Editor

Russell Weinberger, Associate Publisher

Copyright (c) 2014 by Edge Foundation, Inc. All Rights Reserved. Please feel free to use for personal, noncommercial use (only).

_____

Related on Edge:

HeadCon '13

Edge Meetings & Seminars

Edge Master Classes

CONTENTS

Sarah-Jayne Blakemore: "The Teenager's Sense Of Social Self"

The reason why that letter is nice is because it illustrates what's important to that girl at that particular moment in her life. Less important that man landed on moon than things like what she was wearing, what clothes she was into, who she liked, who she didn't like. This is the period of life where that sense of self, and particularly sense of social self, undergoes profound transition. Just think back to when you were a teenager. It's not that before then you don't have a sense of self, of course you do. A sense of self develops very early. What happens during the teenage years is that your sense of who you are—your moral beliefs, your political beliefs, what music you're into, fashion, what social group you're into—that's what undergoes profound change.

[36.22]

SARAH-JAYNE BLAKEMORE is a Royal Society University Research Fellow and Professor of Cognitive Neuroscience, Institute of Cognitive Neuroscience, University College London. Sarah-Jayne Blakemore's Edge Bio Page

Lawrence Ian Reed: "The Face Of Emotion"

What can we tell from the face? There’s some mixed data, but there’s data out there showing a pretty strong coherence between what is felt and what’s expressed on the face. Happiness, sadness, disgust, contempt, fear, anger, all have prototypic or characteristic facial expressions. In addition to that, you can tell whether two emotions are blended together. You can tell the difference between surprise and happiness, and surprise and anger, or surprise and sadness. You can also tell the strength of an emotion. There seems to be a relationship between the strength of the emotion and the strength of the contraction of the associated facial muscles.

LAWRENCE IAN REED is a Visiting Assistant Professor of Psychology, Skidmore College. Lawrence Ian Reed's Edge Bio Page

Molly Crockett: "The Neuroscience of Moral Decision Making"

Imagine we could develop a precise drug that amplifies people’s aversion to harming others; you won’t hurt a fly, everyone becomes Buddhist monks or something. Who should take this drug? Only convicted criminals—people who have committed violent crimes? Should we put it in the water supply? These are normative questions. These are questions about what should be done. I feel grossly unprepared to answer these questions with the training that I have, but these are important conversations to have between disciplines. Psychologists and neuroscientists need to be talking to philosophers about this and these are conversations that we need to have because we don’t want to get to the point where we have the technology and then we haven’t had this conversation because then terrible things could happen.

MOLLY CROCKETT is Associate Professor, Department of Experimental Psychology, University of Oxford; Wellcome Trust Postdoctoral Fellow, Wellcome Trust Centre for Neuroimaging. Molly Crockett's Edge Bio Page

Hugo Mercier: "Toward The Seamless Integration Of The Sciences"

One of the great things about cognitive science is that it allowed us to continue that seamless integration of the sciences, from physics, to chemistry, to biology, and then to the mind sciences, and it's been quite successful at doing this in a relatively short time. But on the whole, I feel there's still a failure to continue this thing towards some of the social sciences such as anthropology, to some extent, and sociology or history that still remain very much shut off from what some would see as progress, and as further integration.

HUGO MERCIER, a Cognitive Scientist, is an Ambizione Fellow at the Cognitive Science Center at the University of Neuchâtel. Hugo Mercier's Edge Bio Page

Jennifer Jacquet: "Shaming At Scale"

Shaming, in this case, was a fairly low-cost form of punishment that had high reputational impact on the U.S. government, and led to a change in behavior. It worked at scale—one group of people using it against another group of people at the group level. This is the kind of scale that interests me. And the other thing that it points to, which is interesting, is the question of when shaming works. In part, it's when there's an absence of any other option. Shaming is a little bit like antibiotics. We can overuse it and actually dilute its effectiveness, because it's linked to attention, and attention is finite. With punishment, in general, using it sparingly is best. But in the international arena, and in cases in which there is no other option, there is no formalized institution, or no formal legislation, shaming might be the only tool that we have, and that's why it interests me.

JENNIFER JACQUET is Assistant Professor of Environmental Studies, NYU; Researching cooperation and the tragedy of the commons; Author, Is Shame Necessary? Jennifer Jacquet's Edge Bio Page

Simone Schnall: "Moral Intuitions, Replication, and the Scientific Study of Human Nature"

In the end, it's about admissible evidence and ultimately, we need to hold all scientific evidence to the same high standard. Right now we're using a lower standard for the replications involving negative findings when in fact this standard needs to be higher. To establish the absence of an effect is much more difficult than the presence of an effect.

SIMONE SCHNALL is a University Senior Lecturer and Director of the Cambridge Embodied Cognition and Emotion Laboratory at Cambridge University. Simone Schnall's Edge Bio Page

David Rand: "How Do You Change People's Minds About What Is Right And Wrong?"

What all these different things boil down to is the idea that there are future consequences for your current behavior. You can't just do whatever you want because if you are selfish now, it'll come back to bite you. I should say that there are lots of theoretical models, math models, computational models, lab experiments, and also real world field data from field experiments showing the power of these reputation observability effects for getting people to cooperate.

DAVID RAND is Assistant Professor of Psychology, Economics, and Management at Yale University, and the Director of Yale University's Human Cooperation Laboratory. David Rand's Edge Bio page

L.A. Paul: "The Transformative Experience"

We're going to pretend that modern-day vampires don't drink the blood of humans; they're vegetarian vampires, which means they only drink the blood of humanely-farmed animals. You have a one-time-only chance to become a modern-day vampire. You think, "This is a pretty amazing opportunity, but do I want to gain immortality, amazing speed, strength, and power? Do I want to become undead, become an immortal monster and have to drink blood? It's a tough call." Then you go around asking people for their advice and you discover that all of your friends and family members have already become vampires. They tell you, "It is amazing. It is the best thing ever. It's absolutely fabulous. It's incredible. You get these new sensory capacities. You should definitely become a vampire." Then you say, " Can you tell me a little more about it?" And they say, "You have to become a vampire to know what it's like. You can't, as a mere human, understand what it's like to become a vampire just by hearing me talk about it. Until you're a vampire, you're just not going to know what it's going to be like."

L.A. PAUL is Professor of Philosophy at the University of North Carolina at Chapel Hill, and Professorial Fellow in the Arché Research Centre at the University of St. Andrews. L.A. Paul's Edge Bio page

Michael McCullough: "Two Cheers For Falsification"

What I want to do today is raise one cheer for falsification, maybe two cheers for falsification. Maybe it’s not philosophical falsificationism I’m calling for, but maybe something more like methodological falsificationism. It has an important role to play in theory development that maybe we have turned our backs on in some areas of this racket we’re in, particularly the part of it that I do—Ev Psych—more than we should have.

MICHAEL MCCULLOUGH is Director, Evolution and Human Behavior Laboratory, Professor of Psychology, Cooper Fellow, University of Miami; Author, Beyond Revenge. Michael McCullough's Edge Bio page

Also Participating

FIERY CUSHMAN is Assistant Professor, Department of Psychology, Harvard University. JOSHUA KNOBE is an Experimental Philosopher; Associate Professor of Philosophy and Cognitive Science, Yale University. DAVID PIZARRO is Associate Professor of Psychology, Cornell University, specializing in moral judgment. LAURIE SANTOS is Associate Professor, Department of Psychology; Director, Comparative Cognition Laboratory, Yale University.

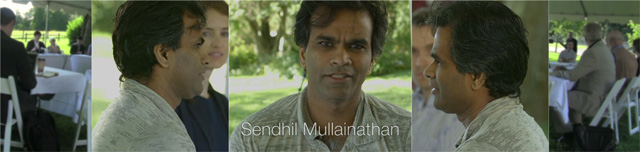

In July, 2013, Edge invited a group of social scientists to participate in an Edge Seminar at Eastover Farm focusing on the state of the art of what the social sciences have to tell us about human nature. The ten speakers were Sendhil Mullainathan, June Gruber, Fiery Cushman, Rob Kurzban, Nicholas Christakis, Joshua Greene, Laurie Santos, Joshua Knobe, David Pizarro, and Daniel C. Dennett. Also participating were Daniel Kahneman, Anne Treisman, and Jennifer Jacquet.

We asked the participants to consider the following questions:

"What's new in your field of social science in the last year or two, and why should we care?" "Why do we want or need to know about it?" "How does it change our view of human nature?"

And in so doing we also asked them to focus broadly and address the major developments in their field (including but not limited to their own research agenda). The goal: to get new, fresh, and original up-to-date field reports on different areas of social science.

What Big Data Means For Social Science (Sendhil Mullainathan) | The Scientific Study of Positive Emotion (June Gruber) | The Paradox of Automatic Planning (Fiery Cushman) | P-Hacking and the Replication Crisis (Rob Kurzban) | The Science of Social Connections (Nicholas Christakis) | The Role of Brain Imaging in Social Science (Joshua Greene) | What Makes Humans Unique (Laurie Santos) | Experimental Philosophy and the Notion of the Self (Joshua Knobe) | The Failure of Social and Moral Intuitions (David Pizarro) | The De-Darwinizing of Cultural Change (Daniel C. Dennett)

HeadCon '13: WHAT'S NEW IN SOCIAL SCIENCE was also an experiment in online video designed to capture the dynamic of an Edge seminar, focusing on the interaction of ideas, and of people. The documentary film-maker Jason Wishnow, the pioneer of "TED Talks" during his tenure as director of film and video at TED (2006-2012), helped us develop this new iteration of Edge Video, filming the ten sessions in split-screen with five cameras, presenting each speaker and the surrounding participants from multiple simultaneous camera perspectives.

We are now pleased to present the program in its entirety, nearly six hours of Edge Video and a downloadable PDF of the 58,000-word transcript.

The great biologist Ernst Mayr (the "Darwin of the 20th Century") once said to me: "Edge is a conversation." And like any conversation, it is evolving. And what a conversation it is!

(6 hours of video; 58,000 words)

John Brockman, Editor

Russell Weinberger, Associate Publisher

Sendhil Mullainathan: What Big Data Means For Social Science (Part I)

We've known big data has had big impacts in business, and in lots of prediction tasks. I want to understand, what does big data mean for what we do for science? Specifically, I want to think about the following context: You have a scientist who has a hypothesis that they would like to test, and I want to think about how the testing of that hypothesis might change as data gets bigger and bigger. So that's going to be the rule of the game. Scientists start with a hypothesis and they want to test it; what's going to happen?

June Gruber: The Scientific Study of Positive Emotion (Part II)

What I'm really interested in is the science of human emotion. In particular, what's captivated my field and my interest the most is trying to understand positive emotions. Not only the ways in which perhaps we think they're beneficial for us or confer some sort of adaptive value, but actually the ways in which they may signal dysfunction and may not actually, in all circumstances and in all intensities, be good for us.

June Gruber is Assistant Professor of Psychology, Director, Positive Emotion & Psychopathology Lab, Yale University.

Fiery Cushman: The Paradox of Automatic Planning (Part III)

I want to tell you about a problem that I have because it highlights a deep problem for the field of psychology. The problem is that every time I sit down to try to write a manuscript I end up eating Ben and Jerry's instead. I sit down and then a voice comes into my head and it says, "How about Ben and Jerry's? You deserve it. You've been working hard for almost ten minutes now." Before I know it, I'm on the way out the door.

Fiery Cushman is Assistant Professor, Cognitive, Linguistic, Social Science, Brown University.

Rob Kurzban: P-Hacking and the Replication Crisis (Part IV)

The first three talks this morning I think have been optimistic. We've heard about the promise of big data, we've heard about advances in emotions, and we've just heard from Fiery, who very cleverly managed to find a way to leave before I gave my remarks about how we're understanding something deep about human nature. I think there's a risk that my remarks are going to be understood as pessimistic but they're really not. My optimism is embodied in the notion that what we're doing here is important and we can do it better.

Rob Kurzban is an Associate Professor, University of Pennsylvania, specializing in evolutionary psychology; Author, Why Everyone (Else) Is A Hypocrite.

Nicholas Christakis: The Science of Social Connections (Part V)

If you think about it, humans are extremely unusual as a species in that we form long-term, non-reproductive unions to other members of our species, namely, we have friends. Why do we do this? Why do we have friends? It's not hard to construct an argument as to why we would have sex with other people but it's rather more difficult to construct an argument as to why we would befriend other people. Yet we and very few other species do this thing. So I'd like to problematize that, I'd like to problematize friendship first.

Nicholas Christakis is a Physician and Social Scientist; Director, The Human Nature Lab, Yale University; Coauthor, Connected: The Surprising Power Of Our Social Networks And How They Shape Our Lives.

Joshua Greene: The Role of Brain Imaging in Social Science (Part VI)

We're here in early September 2013 and the topic that's on everybody's minds (not just here but everywhere) is Syria. Will the U.S. bomb Syria? Should the U.S. bomb Syria? Why do some people think that the U.S. should? Why do other people think that the U.S. shouldn't? These are the kinds of questions that occupy us every day. This is a big national and global issue, sometimes it's personal issues, and these are the kinds of questions that social science tries to answer.

Joshua Greene is John and Ruth Hazel Associate Professor of the Social Sciences and the director of the Moral Cognition Laboratory in the Department of Psychology, Harvard University. Author, Moral Tribes: Emotion, Reason, And The Gap Between Us And Them.

Laurie Santos: What Makes Humans Unique (Part VII)

The findings in comparative cognition I'm going to talk about are often different than the ones you hear comparative cognitive researchers typically talking about. Usually when somebody up here is talking about how animals are redefining human nature, it's cases where we're seeing animals being really similar to humans—elephants who do mirror self-recognition; rodents who have empathy; capuchin monkeys who obey prospect theory—all these cases where we see animals doing something really similar.

Laurie Santos is Associate Professor, Department of Psychology; Director, Comparative Cognition Laboratory, Yale University.

Joshua Knobe: Experimental Philosophy and the Notion of the Self (Part VIII)

What is the field of experimental philosophy? Experimental philosophy is a relatively new field—one that just cropped up around the past ten years or so, and it's an interdisciplinary field, uniting ideas from philosophy and psychology. In particular, what experimental philosophers tend to do is to go after questions that are traditionally associated with philosophy but to go after them using the methods that have been traditionally associated with psychology.

Joshua Knobe is an Experimental Philosopher; Associate Professor of Philosophy and Cognitive Science, Yale University.

David Pizarro: The Failure of Social and Moral Intuitions (Part IX)

We had people interact—strangers interact in the lab—and we filmed them, and we got the cues that seemed to indicate that somebody's going to be either more cooperative or less cooperative. But the fun part of this study was that for the second part we got those cues and we programmed a robot—Nexi the robot, from the lab of Cynthia Breazeal at MIT—to emulate, in one condition, those non-verbal gestures. So what I'm talking about today is not about the results of that study, but rather what was interesting about looking at people interacting with the robot.

David Pizarro is Associate Professor of Psychology, Cornell University, specializing in moral judgment.

Daniel C. Dennett: The De-Darwinizing of Cultural Change (Part X)

Think for a moment about a termite colony or an ant colony—amazingly competent in many ways, we can do all sorts of things, treat the whole entity as a sort of cognitive agent and it accomplishes all sorts of quite impressive behavior. But if I ask you, "What is it like to be a termite colony?" most people would say, "It's not like anything." Well, now let's look at a brain, let's look at a human brain—100 billion neurons, roughly speaking, and each one of them is dumber than a termite and they're all sort of semi-independent. If you stop and think about it, they're all direct descendants of free-swimming unicellular organisms that fended for themselves for a billion years on their own. There's a lot of competence, a lot of can-do in their background, in their ancestry. Now they're trapped in the skull and they may well have agendas of their own; they have competences of their own, no two are alike. Now the question is, how is a brain inside a head any more integrated, any more capable of there being something that it's like to be that than a termite colony? What can we do with our brains that the termite colony couldn't do or maybe that many animals couldn't do?

Daniel C. Dennett is a Philosopher; Austin B. Fletcher Professor of Philosophy, Co-Director, Center for Cognitive Studies, Tufts University; Author, Intuition Pumps.

ALSO PARTICIPATING

Daniel Kahneman is Recipient, Nobel Prize in Economics, 2002; Presidential Medal of Freedom, 2013; Eugene Higgins Professor of Psychology, Princeton University; Author, Thinking Fast And Slow. Anne Treisman is Professor Emeritus of Psychology, Princeton University; Recipient, National Medal of Science, 2013.

Jennifer Jacquet is Clinical Assistant Professor of Environmental Studies, NYU; Researching cooperation and the tragedy of the commons.

Out-take from the trailer I made for the 1968 movie "Head" (Columbia Pictures; Directed by Bob Rafelson; Written by Jack Nicholson)

Something radically new is in the air: new ways of understanding physical systems, new ways of thinking about thinking that call into question many of our basic assumptions. A realistic biology of the mind, advances in evolutionary biology, physics, information technology, genetics, neurobiology, psychology, engineering, the chemistry of materials: all are questions of critical importance with respect to what it means to be human. For the first time, we have the tools and the will to undertake the scientific study of human nature.

This began in the early seventies, when, as a graduate student at Harvard, evolutionary biologist Robert Trivers wrote five papers that set forth an agenda for a new field: the scientific study of human nature. In the past thirty-five years this work has spawned thousands of scientific experiments, new and important evidence, and exciting new ideas about who and what we are presented in books by scientists such as Richard Dawkins, Daniel C. Dennett, Steven Pinker, and Edward O. Wilson among many others.

In 1975, Wilson, a colleague of Trivers at Harvard, predicted that ethics would someday be taken out of the hands of philosophers and incorporated into the "new synthesis" of evolutionary and biological thinking. He was right.

Scientists engaged in the scientific study of human nature are gaining sway over the scientists and others in disciplines that rely on studying social actions and human cultures independent from their biological foundation.

Nowhere is this more apparent than in the field of moral psychology. Using babies, psychopaths, chimpanzees, fMRI scanners, web surveys, agent-based modeling, and ultimatum games, moral psychology has become a major convergence zone for research in the behavioral sciences.

So what do we have to say? Are we moving toward consensus on some points? What are the most pressing questions for the next five years? And what do we have to offer a world in which so many global and national crises are caused or exacerbated by moral failures and moral conflicts? It seems like everyone is studying morality these days, reaching findings that complement each other more often than they clash.

Culture is humankind’s biological strategy, according to Roy F. Baumeister, and so human nature was shaped by an evolutionary process that selected in favor of traits conducive to this new, advanced kind of social life (culture). To him, therefore, studies of brain processes will augment rather than replace other approaches to studying human behavior, and he fears that the widespread neglect of the interpersonal dimension will compromise our understanding of human nature. Morality is ultimately a system of rules that enables groups of people to live together in reasonable harmony. Among other things, culture seeks to replace aggression with morals and laws as the primary means to solve the conflicts that inevitably arise in social life. Baumeister’s work has explored such morally relevant topics as evil, self-control, choice, and free will. [More]

According to Yale psychologist Paul Bloom, humans are born with a hard-wired morality. A deep sense of good and evil is bred in the bone. His research shows that babies and toddlers can judge the goodness and badness of others' actions; they want to reward the good and punish the bad; they act to help those in distress; they feel guilt, shame, pride, and righteous anger. [More]

Harvard cognitive neuroscientist and philosopher Joshua D. Greene sees our biggest social problems — war, terrorism, the destruction of the environment, etc. — arising from our unwitting tendency to apply paleolithic moral thinking (also known as "common sense") to the complex problems of modern life. Our brains trick us into thinking that we have Moral Truth on our side when in fact we don't, and blind us to important truths that our brains were not designed to appreciate. [More]

University of Virginia psychologist Jonathan Haidt's research indicates that morality is a social construction which has evolved out of raw materials provided by five (or more) innate "psychological" foundations: Harm, Fairness, Ingroup, Authority, and Purity. Highly educated liberals generally rely upon and endorse only the first two foundations, whereas people who are more conservative, more religious, or of lower social class usually rely upon and endorse all five foundations. [More]

The failure of science to address questions of meaning, morality, and values, notes neuroscientist Sam Harris, has become the primary justification for religious faith. In doubting our ability to address questions of meaning and morality through rational argument and scientific inquiry, we offer a mandate to religious dogmatism, superstition, and sectarian conflict. The greater the doubt, the greater the impetus to nurture divisive delusions. [More]

A lot of Yale experimental philosopher Joshua Knobe's recent research has been concerned with the impact of people's moral judgments on their intuitions about questions that might initially appear to be entirely independent of morality (questions about intention, causation, etc.). It has often been suggested that people's basic approach to thinking about such questions is best understood as being something like a scientific theory. He has offered a somewhat different view, according to which people's ordinary way of understanding the world is actually infused through and through with moral considerations. He is arguably most widely known for what has come to be called "the Knobe effect" or the "Side-Effect Effect." [More]

NYU psychologist Elizabeth Phelps investigates the brain activity underlying memory and emotion. Much of Phelps' research has focused on the phenomenon of "learned fear," a tendency of animals to fear situations associated with frightening events. Her primary focus has been to understand how human learning and memory are changed by emotion and to investigate the neural systems mediating their interactions. A recent study published in Nature by Phelps and her colleagues, shows how fearful memories can be wiped out for at least a year using a drug-free technique that exploits the way that human brains store and recall memories. [More]

Disgust has been keeping Cornell psychologist David Pizarro particularly busy, as it has been implicated by many as an emotion that plays a large role in many moral judgments. His lab results have shown that an increased tendency to experience disgust (as measured using the Disgust Sensitivity Scale, developed by Jon Haidt and colleagues), is related to political orientation. [More]

Each of the above participants led a 45-minute session on Day One that consisted of a 25-minute talk. Day Two consisted of two 90-minute open discussions on "The Science of Morality", intended as a starting point to begin work on a consensus document on the state of moral psychology to be published on Edge in the near future.

Among the members of the press in attendance were: Sharon Begley, Newsweek; Drake Bennett, Ideas, Boston Globe; David Brooks, OpEd Columnist, New York Times; Daniel Engber, Slate; Amanda Gefter, Opinion Editor, New Scientist; Jordan Mejias, Frankfurter Allgemeine Zeitung; Gary Stix, Scientific American; Pamela Weintraub, Discover Magazine.

The New Science of Morality, Part 1

[JONATHAN HAIDT:] As the first speaker, I'd like to thank the Edge Foundation for bringing us all together, and bringing us all together in this beautiful place. I'm looking forward to having these conversations with all of you.

I was recently at a conference on moral development, and a prominent Kohlbergian moral psychologist stood up and said, "Moral psychology is dying." And I thought, well, maybe in your neighborhood property values are plummeting, but in the rest of the city, we are going through a renaissance. We are in a golden age.

The New Science of Morality, Part 2

[JOSHUA D. GREENE:] Now, it's true that, as scientists, our basic job is to describe the world as it is. But I don't think that that's the only thing that matters. In fact, I think the reason why we're here, the reason why we think this is such an exciting topic, is not that we think that the new moral psychology is going to cure cancer. Rather, we think that understanding this aspect of human nature is going to perhaps change the way we think and change the way we respond to important problems and issues in the real world. If all we were going to do is just describe how people think and never do anything with it, never use our knowledge to change the way we relate to our problems, then I don't think there would be much of a payoff. I think that applying our scientific knowledge to real problems is the payoff.

The New Science of Morality, Part 3

[SAM HARRIS:] ...I think we should differentiate three projects that seem to me to be easily conflated, but which are distinct and independently worthy endeavors. The first project is to understand what people do in the name of "morality." We can look at the world, witnessing all of the diverse behaviors, rules, cultural artifacts, and morally salient emotions like empathy and disgust, and we can study how these things play out in human communities, both in our time and throughout history. We can examine all these phenomena in as nonjudgmental a way as possible and seek to understand them. We can understand them in evolutionary terms, and we can understand them in psychological and neurobiological terms, as they arise in the present. And we can call the resulting data and the entire effort a "science of morality". This would be a purely descriptive science of the sort that I hear Jonathan Haidt advocating.

The New Science of Morality, Part 4

[ROY BAUMEISTER:] And so that said, in terms of trying to understand human nature, well, and morality too, nature and culture certainly combine in some ways to do this, and I'd put these together in a slightly different way, it's not nature's over here and culture's over there and they're both pulling us in different directions. Rather, nature made us for culture. I'm convinced that the distinctively human aspects of psychology, the human aspects of evolution were adaptations to enable us to have this new and better kind of social life, namely culture.

Culture is our biological strategy. It's a new and better way of relating to each other, based on shared information and division of labor, interlocking roles and things like that. And it's worked. It's how we solve the problems of survival and reproduction, and it's worked pretty well for us in that regard. And so the distinctively human traits are ones often there to make this new kind of social life work.

Now, where does this leave us with morality?

The New Science of Morality, Part 5

[PAUL BLOOM:] What I want to do today is talk about some ideas I've been exploring concerning the origin of human kindness. And I'll begin with a story that Sarah Hrdy tells at the beginning of her excellent new book, "Mothers And Others." She describes herself flying on an airplane. It’s a crowded airplane, and she's flying coach. She waits in line to get to her seat; later in the flight, food is going around, but she's not the first person to be served; other people are getting their meals ahead of her. And there's a crying baby. The mother's soothing the baby, the person next to them is trying to hide his annoyance, other people are coo-cooing the baby, and so on.

As Hrdy points out, this is entirely unexceptional. Billions of people fly each year, and this is how most flights are. But she then imagines what would happen if every individual on the plane was transformed into a chimp. Chaos would reign. By the time the plane landed, there'd be body parts all over the aisles, and the baby would be lucky to make it out alive.

The point here is that people are nicer than chimps.

The New Science of Morality, Part 6

[DAVID PIZARRO:] What I want to talk about is piggybacking off of the end of Paul's talk, where he started to speak a little bit about the debate that we've had in moral psychology and in philosophy, on the role of reason and emotion in moral judgment. I'm going to keep my claim simple, but I want to argue against a view that probably nobody here has, (because we're all very sophisticated), but it's often spoken of emotion and reason as being at odds with each other — in a sense that to the extent that emotion is active, reason is not active, and to the extent that reason is active, emotion is not active. (By emotion here, I mean, broadly speaking, affective influences).

I think that this view is mistaken (although it is certainly the case sometimes). The interaction between these two is much more interesting. So I'm going to talk a bit about some studies that we've done. Some of them have been published, and a couple of them haven't (because they're probably too inappropriate to publish anywhere, but not too inappropriate to speak to this audience). They are on the role of emotive forces in shaping our moral judgment. I use the term "emotive," because they are about motivation and how motivation affects the reasoning process when it comes to moral judgment.

The New Science of Morality, Part 7

[ELIZABETH PHELPS:] In spite of these beliefs I do think about decisions as reasoned or instinctual when I'm thinking about them for myself. And this has obviously been a very powerful way of thinking about how we do things because it goes back to earliest written thoughts. We have reason, we have emotion, and these two things can compete. And some are unique to humans and others are shared with other species.

And economists, when thinking about decisions, have also adopted what we call a dual system approach. This is obviously a different dual system approach and here I'm focusing mostly on Kahneman's System 1 and System 2. As probably everybody in this room knows Kahneman and Tversky showed that there were a number of ways in which we make decisions that didn't seem to be completely consistent with classical economic theory and easy to explain. And they proposed Prospect Theory and suggested that we actually have two systems we use when making decisions, one of which we call reason, one of which we call intuition.

Kahneman didn't say emotion. He didn't equate emotion with intuition.

The New Science of Morality, Part 8

[JOSHUA KNOBE:] ...what's really exciting about this new work is not so much just the very idea of philosophers doing experiments but rather the particular things that these people ended up showing. When these people went out and started doing these experimental studies, they didn't end up finding results that conformed to the traditional picture. They didn't find that there was a kind of initial stage in which people just figured out, on a factual level, what was going on in a situation, followed by a subsequent stage in which they used that information in order to make a moral judgment. Rather they really seemed to be finding exactly the opposite.

What they seemed to be finding is that people's moral judgments were influencing the process from the very beginning, so that people's whole way of making sense of their world seemed to be suffused through and through with moral considerations. In this sense, our ordinary way of making sense of the world really seems to look very, very deeply different from a kind of scientific perspective on the world. It seems to be value-laden in this really fundamental sense.

EDGE IN THE NEWS

BOSTON GLOBE

August 15, 2010

IDEAS

The surprising moral force of disgust

By Drake Bennett

...Psychologists like Haidt are leading a wave of research into the so-called moral emotions — not just disgust, but others like anger and compassion — and the role those feelings play in how we form moral codes and apply them in our daily lives. A few, like Haidt, go so far as to claim that all the world's moral systems can best be characterized not by what their adherents believe, but what emotions they rely on.

There is deep skepticism in parts of the psychology world about claims like these. And even within the movement there is a lively debate over how much power moral reasoning has — whether our behavior is driven by thinking and reasoning, or whether thinking and reasoning are nothing more than ornate rationalizations of what our emotions ineluctably drive us to do. Some argue that morality is simply how human beings and societies explain the peculiar tendencies and biases that evolved to help our ancestors survive in a world very different from ours.

A few of the leading researchers in the new field met late last month at a small conference in western Connecticut, hosted by the Edge Foundation, to present their work and discuss the implications. Among the points they debated was whether their work should be seen as merely descriptive, or whether it should also be a tool for evaluating religions and moral systems and deciding which were more and less legitimate — an idea that would be deeply offensive to religious believers around the world.

But even doing the research in the first place is a radical step. The agnosticism central to scientific inquiry is part of what feels so dangerous to philosophers and theologians. By telling a story in which morality grows out of the vagaries of human evolution, the new moral psychologists threaten the claim of universality on which most moral systems depend — the idea that certain things are simply right, others simply wrong. If the evolutionary story about the moral emotions is correct, then human beings, by being a less social species or even having a significantly different prehistoric diet, might have ended up today with an entirely different set of religions and ethical codes. Or we might never have evolved the concept of morals at all. ...

THE ATLANTIC

July 29, 2010

Alexis Madrigal

University of Virginia moral psychologist Jonathan Haidt delivered an absolutely dynamite talk on new advances in his field last week. The video and a transcript have been posted by Edge.org, a loose consortium of very smart people run by John Brockman. Haidt whips us through centuries of moral thought, recent evolutionary psychology, and discloses which two papers every single psychology student should have to read. Through it all, he's funny, erudite, and understandable. Here, we excerpt a few paragraphs from his conclusion, in which Haidt tells us how to think about our moral minds: ...

FRANKFURTER ALLGEMEINE ZEITUNG

July 28, 2010

FEUILLETON

Moral reasoning

SOLEMN HIGH MASS IN THE TEMPLE OF REASON

How do you train a moral muscle? American researchers take their first steps on the path to a science of morality without God hypothesis. The last word should have the reason.

By Jordan Mejias

[Google translation:]

28th July 2010 One was missing and had he turned up, the illustrious company would have had nothing more to discuss and think. Even John Brockman, literary agent, and guru of the third culture, it could not move, stop by in his salon, which he every summer from the virtuality of the Internet, click on edge.org moved, in a New England idyl. There, in the green countryside of Washington, Connecticut, it was time to morality as a new science. When new it was announced, because their devoted not philosophers and theologians, but psychologists, biologists, neurologists, and at most such philosophers, based on experiments and the insights of brain research. They all had to admit, even to be on the search, but they missed not one who lacked the authority in matters of morality: God.

The secular science dominated the conference. As it should come to an end, however, a consensus first, were the conclusions apart properly. Even on the question of whether religion should be regarded as part of evolution, remained out of the clear answer. Agreement, the participants were at least that is to renounce God. Him, the unanimous result of her certainly has not been completed or not may be locked investigations, did not owe the man morality. That it is innate in him, but did so categorically not allege any. Only on the findings that morality is a natural phenomenon, there was agreement, even if only to a certain degree. For, should be understood not only the surely. Besides nature makes itself in morality and the culture just noticeable, and where the effect of one ends and the other begins, is anything but settled.

Better be nice

In a baby science, as Elizabeth Phelps, a neuroscientist at New York University, called the moral psychology may by way of surprise not much groping. How about some with free will, will still remain for the foreseeable future a mystery. Moral instincts was, after all, with some certainty Roy Baumeister, a social psychologist at Florida State University, are not built into us. We are only given the ability to acquire systems of morality. Gives us to be altruistic, we are selfish by nature, benefits. It is moral to be compared with a muscle, the fatigue, but can also be strengthened through regular training. What sounds easier than is done, if not clear what is to train as well. A moral center that we can selectively edit points, our brain does not occur.

But amazingly, with all that we are nice to each other are forced reproduction, and Paul Bloom, a psychologist at Yale, is noticed. Obviously, we have realized that our lives more comfortable when others do not fight us. Factors of Nettigkeitswachstums Bloom also recognizes in capitalism that will work better with nice people, and world religions, which act in large groups and their dynamics as it used to strangers to meet each other favorably. The fact that we have developed over the millennia morally beneficial, holds not only he has been proved. Even the neurologist Sam Harris, author of "The Moral Landscape. How Science Can Determine Human Values" (Free Press), wants to make this progress not immoral monsters like Hitler and Stalin spoil. ...

[...Continue: German language original | Google translation]

ANDREW SULLIVAN — THE DAILY DISH

25 JUL 2010

Edge held a seminar on morality. Here's Joshua Knobe:

Over the past few years, a series of recent experimental studies have reexamined the ways in which people answer seemingly ordinary questions about human behavior. Did this person act intentionally? What did her actions cause? Did she make people happy or unhappy? It had long been assumed that people's answers to these questions somehow preceded all moral thinking, but the latest research has been moving in a radically different direction. It is beginning to appear that people's whole way of making sense of the world might be suffused with moral judgment, so that people's moral beliefs can actually transform their most basic understanding of what is happening in a situation.

David Brooks' illuminating column on this topic covered the same ground:

...

...Advantage Locke over Hobbes.

THE NEW YORK TIMES

July 23, 2010

OP-ED COLUMNIST

Scientific research is showing that we are born with an innate moral sense.

By DAVID BROOKS

Washington, Conn.

Where does our sense of right and wrong come from? Most people think it is a gift from God, who revealed His laws and elevates us with His love. A smaller number think that we figure the rules out for ourselves, using our capacity to reason and choosing a philosophical system to live by.

Moral naturalists, on the other hand, believe that we have moral sentiments that have emerged from a long history of relationships. To learn about morality, you don't rely upon revelation or metaphysics; you observe people as they live.

This week a group of moral naturalists gathered in Connecticut at a conference organized by the Edge Foundation. ...

By the time humans came around, evolution had forged a pretty firm foundation for a moral sense. Jonathan Haidt of the University of Virginia argues that this moral sense is like our sense of taste. We have natural receptors that help us pick up sweetness and saltiness. In the same way, we have natural receptors that help us recognize fairness and cruelty. Just as a few universal tastes can grow into many different cuisines, a few moral senses can grow into many different moral cultures.

Paul Bloom of Yale noted that this moral sense can be observed early in life. Bloom and his colleagues conducted an experiment in which they showed babies a scene featuring one figure struggling to climb a hill, another figure trying to help it, and a third trying to hinder it. ...

THE REALITY CLUB

QUESTIONS FOR "THE MORAL NINE" FROM THE EDGE COMMUNITY

Howard Gardner, Geoffrey Miller, Brian Eno, James Fowler, Rebecca Mackinnon, Jaron Lanier, Eva Wisten, Brian Knutson, Andrian Kreye, Anonymous, Alison Gopnik, Robert Trivers, Randolph Nesse, M.D.

HOWARD GARDNER

Psychologist, Harvard University; Author, Changing Minds

Enlightenment ideas were the product of white male Christians living in the 18th century. They form the basis of the Universal Declaration of Human Rights and other Western-inflected documents. But in our global world, Confucian societies and Islamic societies have their own guidelines about progress, individuality, democratic processes, human obligations. In numbers they represent more of humanity and are likely to become even more numerous in this century. What do the human sciences have to contribute to an understanding of these 'multiple voices'? Can they be combined harmoniously or are there unbridgeable gaps?

GEOFFREY MILLER

Evolutionary Psychologist, University of New Mexico; Author, Spent: Sex, Evolution, and Consumer Behavior

1) Many people become vegans, protect animal rights, and care about the long-term future of the environment. It seems hard to explain these 'green virtues' in terms of the usual evolutionary-psychology selection pressures: reciprocity, kin selection, group selection -- so how can we explain their popularity (or unpopularity)?

2) What are the main sex differences in human morality, and why?

3) What role did costly signaling play in the evolution of human morality (i.e. 'showing off' certain moral virtues to attract mates, friends, or allies, or to intimidate rival individuals or competing groups)?

4) Given the utility of 'adaptive self-deception' in human evolution -- one part of the mind not knowing what adaptive strategies another part is pursuing -- what could it mean to have the moral virtue of 'integrity' for an evolved being?

5) Why do all 'mental illnesses' (depression, mania, schizophrenia, borderline, psychopathy, narcissism, mental retardation, etc.) reduce altruism, compassion, and loving-kindness? Is this partly why they are recognized as mental illnesses?

BRIAN ENO

Artist; Composer; Recording Producer: U2, Coldplay, Talking Heads, Paul Simon; Recording Artist

Is morality a human invention - a way of trying to stabilise human societies and make them coherent - or is there evidence of a more fundamental sense of morality in creatures other than humans?

Another way of asking this question is: are there moral concepts that are not specifically human?

Yet another way of asking this is: are moral concepts specifically the province of human brains? And, if they are, is there any basis for suggesting that there are any 'absolute' moral precepts?

Or: do any other creatures exhibit signs of 'honour' or 'shame'?

JAMES FOWLER

Political Scientist, University of California, San Diego; Coauthor, Connected

Given recent evidence about the power of social networks, what is our personal responsibility to our friends' friends?

REBECCA MACKINNON

Blogger & Cofounder, Global Voices Online; Former CNN journalist and head of CNN bureaus in Beijing & Tokyo; Visiting Fellow, Princeton University's Center for Information Technology Policy

Does the human race require a major moral evolution in order to survive? Isn't part of the problem that our intelligence has vastly out-evolved our morality, which is still stuck back in the paleolithic age? Is there anything we can do? Or is this the tragic flaw that dooms us? Might technology help to facilitate or speed up our moral evolution, as some say technology is already doing for human intelligence? We have artificial intelligence and augmented reality. What about artificial or augmented morality?

JARON LANIER

Musician, Computer Scientist; Pioneer of Virtual Reality; Author, You Are Not A Gadget: A Manifesto

A crucial topic is how group interactions change moral perception. To what degree are there clan-oriented processes inherent in the human brain? In particular, how can well-informed software designs for network-mediated social experience play a role in changing behavior and values? Is there anything specific that can be done to reduce mob-like phenomena, as is spawned in online forums like 4chan's /b/, without resorting to degrees of imposed control? This is where a science of moral psychology could inform engineering.

EVA WISTEN

Journalist; Author, Single in Manhattan

What would be a good definition - a few examples - of common moral sense? How does an averagely moral human think and behave? (It's easy to paint a picture of the actions of an immoral person...) Now, how can this be expanded?

Could an understanding/acceptance of the idea that we are all having unconscious instincts for what's right and wrong replace the idea of religion as necessary for moral behavior?

What tends to be the hierarchy of "blinders" - the arguments we, consciously or unconsciously, use to relabel exploitative acts as good? (I did it for God, I did it for the German People, I did it for Jodie Foster...) What evolutionary purpose have they filled?

BRIAN KNUTSON

Psychologist & Neuroscientist, Stanford

What is the difference between morality and emotion? How can scientists distinguish between the two (or should they)? Why has Western culture been so historically reluctant to recognize emotion as a major influence on moral judgments?

ANDRIAN KREYE

Feuilleton Editor, Süddeutsche Zeitung

Is there a fine line or a wide gap between morality and ideology?

ANONYMOUS

1. Some of the new literature on moral psychology feels like traditional discussions of ethics with a few numbers attached from surveys; almost like old ideas in a new can. As an outsider I'd be curious to know what's really new here. Specifically, if William James were resurrected what might be the new findings we could explain to him that would astound him or fundamentally change his way of thinking?

2. Is there a reason to believe there is such a thing as moral psychology that transcends upbringing and culture? Are we really studying a fundamental feature of the mind or simply the outcome of a social process?

ALISON GOPNIK

Psychologist, UC, Berkeley; Author, The Philosophical Baby

Many people have proposed an evolutionary psychology/nativist view of moral capacities. But surely one of the most dramatic and obvious features of our moral capacities is their capacity for change and even radical transformation with new experiences. At the same time this transformation isn't just random but seems to have a progressive quality. It's analogous to science, which presents similar challenges to a nativist view. And even young children are, empirically, capable of this kind of change in both domains. How do we get to new and better conceptions of the world, cognitive or moral, if the nativists are right?

ROBERT TRIVERS

Evolutionary Biologist, Rutgers University; Coauthor, Genes In Conflict: The Biology of Selfish Genetic Elements

Shame.

What is it? When does it occur? What function does it serve? How is it related, if at all, to guilt? Is it related to "morality" and if so how?

Key point, John, is that shame is a complex mixture of self and other: Tiger Woods SHAMES his wife in public — he may likewise be ashamed.

If i fuck a goat i may feel ashamed if someone saw it, but absent harm to the goat, not clear how i should respond if i alone witness it.

"Life consists of propositions about life."

— Wallace Stevens ("Men Made Out Of Words")

"I just read the Life transcript book and it is fantastic. One of the better books I've read in a while. Super rich, high signal to noise, great subject."

— Kevin Kelly, Editor-At-Large, Wired

"The more I think about it the more I'm convinced that Life: What A Concept! was one of those memorable events that people in years to come will see as a crucial moment in history. After all, it's where the dawning of the age of biology was officially announced."

— Andrian Kreye, Süddeutsche Zeitung

EDGE PUBLISHES "LIFE: WHAT A CONCEPT!" TRANSCRIPT AS DOWNLOADABLE PDF BOOK [1.14.08]

Edge is pleased to announce the online publication of the complete transcript of this summer's Edge event, Life: What a Concept! as a 43,000-word downloadable PDF Edgebook.

The event took place at Eastover Farm in Bethlehem, CT on Monday, August 27th (see below). Invited to address the topic "Life: What a Concept!" were Freeman Dyson, J. Craig Venter, George Church, Robert Shapiro, Dimitar Sasselov, and Seth Lloyd, who focused on their new, and in more than a few cases, startling research, and/or ideas in the biological sciences.

Reporting on the August event, Andrian Kreye, Feuilleton (Arts & Ideas) Editor of Süddeutsche Zeitung wrote:

Soon genetic engineering will shape our daily life to the same extent that computers do today. This sounds like science fiction, but it is already reality in science. Thus genetic engineer George Church talks about the biological building blocks that he is able to synthetically manufacture. It is only a matter of time until we will be able to manufacture organisms that can self-reproduce, he claims. Most notably J. Craig Venter succeeded in introducing a copy of a DNA-based chromosome into a cell, which from then on was controlled by that strand of DNA.

Jordan Mejias, Arts Correspondent of Frankfurter Allgemeine Zeitung, noted that:

These are thoughts to make jaws drop...Nobody at Eastover Farm seemed afraid of a eugenic revival. What in German circles would have released violent controversies, here drifts by unopposed under mighty maple trees that gently whisper in the breeze.

The following Edge feature on the "Life: What a Concept!" August event includes a photo album; streaming video; and html files of each of the individual talks.

In April, Dennis Overbye, writing in the New York Times "Science Times," broke the story of the discovery by Dimitar Sasselov and his colleagues of five earth-like exo-planets, one of which "might be the first habitable planet outside the solar system."

At the end of June, Craig Venter announced the results of his lab's work on genome transplantation methods that allow for the transformation of one type of bacteria into another, dictated by the transplanted chromosome. In other words, one species becomes another. In talking to Edge about the research, Venter noted the following:

Now we know we can boot up a chromosome system. It doesn't matter if the DNA is chemically made in a cell or made in a test tube. Until this development, if you made a synthetic chromosome you had the question of what do you do with it. Replacing the chromosome with existing cells, if it works, seems the most effective way to replace one already in an existing cell system. We didn't know if it would work or not. Now we do. This is a major advance in the field of synthetic genomics. We now know we can create a synthetic organism. It's not a question of 'if', or 'how', but 'when', and in this regard, think weeks and months, not years.

In July, in an interesting and provocative essay in New York Review of Books entitled "Our Biotech Future," Freeman Dyson wrote:

The Darwinian interlude has lasted for two or three billion years. It probably slowed down the pace of evolution considerably. The basic biochemical machinery of life had evolved rapidly during the few hundreds of millions of years of the pre-Darwinian era, and changed very little in the next two billion years of microbial evolution. Darwinian evolution is slow because individual species, once established, evolve very little. With rare exceptions, Darwinian evolution requires established species to become extinct so that new species can replace them.

Now, after three billion years, the Darwinian interlude is over. It was an interlude between two periods of horizontal gene transfer. The epoch of Darwinian evolution based on competition between species ended about ten thousand years ago, when a single species, Homo sapiens, began to dominate and reorganize the biosphere. Since that time, cultural evolution has replaced biological evolution as the main driving force of change. Cultural evolution is not Darwinian. Cultures spread by horizontal transfer of ideas more than by genetic inheritance. Cultural evolution is running a thousand times faster than Darwinian evolution, taking us into a new era of cultural interdependence which we call globalization. And now, as Homo sapiens domesticates the new biotechnology, we are reviving the ancient pre-Darwinian practice of horizontal gene transfer, moving genes easily from microbes to plants and animals, blurring the boundaries between species. We are moving rapidly into the post-Darwinian era, when species other than our own will no longer exist, and the rules of Open Source sharing will be extended from the exchange of software to the exchange of genes. Then the evolution of life will once again be communal, as it was in the good old days before separate species and intellectual property were invented.

It's clear from these developments as well as others, that we are at the end of one empirical road and ready for adventures that will lead us into new realms.

This year's Annual Edge Event took place at Eastover Farm in Bethlehem, CT on Monday, August 27th. Invited to address the topic "Life: What a Concept!" were Freeman Dyson, J. Craig Venter, George Church, Robert Shapiro, Dimitar Sasselov, and Seth Lloyd, who focused on their new, and in more than a few cases, startling research, and/or ideas in the biological sciences.

Physicist Freeman Dyson envisions a biotech future which supplants physics and notes that after three billion years, the Darwinian interlude is over. He refers to an interlude between two periods of horizontal gene transfer, a subject explored in his abovementioned essay.

Craig Venter, who decoded the human genome, surprised the world in late June by announcing the results of his lab's work on genome transplantation methods that allow for the transformation of one type of bacteria into another, dictated by the transplanted chromosome. In other words, one species becomes another.

George Church, the pioneer of the Synthetic Biology revolution, thinks of the cell as an operating system, and engineers taking the place of traditional biologists in retooling stripped down components of cells (bio-bricks) in much the same vein as in the late 70s when electrical engineers were working their way to the first personal computer by assembling circuit boards, hard drives, monitors, etc.

Biologist Robert Shapiro disagrees with scientists who believe that an extreme stroke of luck was needed to get life started in a non-living environment. He favors the idea that life arose through the normal operation of the laws of physics and chemistry. If he is right, then life may be widespread in the cosmos.

Dimitar Sasselov, Planetary Astrophysicist, and Director of the Harvard Origins of Life Initiative, has made recent discoveries of exo-planets ("Super-Earths"). He looks at new evidence to explore the question of how chemical systems become living systems.

Quantum engineer Seth Lloyd sees the universe as an information processing system in which simple systems such as atoms and molecules must necessarily give rise to complex structures such as life, and life itself must give rise to even greater complexity, such as human beings, societies, and whatever comes next.

A small group of journalists interested in the kind of issues that are explored on Edge were present: Corey Powell, Discover, Jordan Mejias, Frankfurter Allgemeine Zeitung, Heidi Ledford, Nature, Greg Huang, New Scientist, Deborah Treisman, New Yorker, Edward Rothstein, The New York Times, Andrian Kreye, Süddeutsche Zeitung, Antonio Regalado, Wall Street Journal. Guests included Heather Kowalski, The J. Craig Venter Institute, Ting Wu, The Wu Lab, Harvard Medical School, and the artist Stephanie Rudloe. Attending for Edge: Katinka Matson, Russell Weinberger, Max Brockman, and Karla Taylor.

We are witnessing a point in which the empirical has intersected with the epistemological: everything becomes new, everything is up for grabs. Big questions are being asked, questions that affect the lives of everyone on the planet. And don't even try to talk about religion: the gods are gone.

Following the theme of new technologies=new perceptions, I asked the speakers to take a third culture slant in the proceedings and explore not only the science but the potential for changes in the intellectual landscape as well.

We are pleased to present the transcripts of the talks and conversation along with streaming video clips (links below).

— JB

FREEMAN DYSON

The essential idea is that you separate metabolism from replication. We know modern life has both metabolism and replication, but they're carried out by separate groups of molecules. Metabolism is carried out by proteins and all kinds of other molecules, and replication is carried out by DNA and RNA. That maybe is a clue to the fact that they started out separate rather than together. So my version of the origin of life is that it started with metabolism only.

FREEMAN DYSON: First of all I wanted to talk a bit about origin of life. To me the most interesting question in biology has always been how it all got started. That has been a hobby of mine. We're all equally ignorant, as far as I can see. That's why somebody like me can pretend to be an expert.

I was struck by the picture of early life that appeared in Carl Woese's article three years ago. He had this picture of the pre-Darwinian epoch when genetic information was open source and everything was shared between different organisms. That picture fits very nicely with my speculative version of origin of life.

The essential idea is that you separate metabolism from replication. We know modern life has both metabolism and replication, but they're carried out by separate groups of molecules. Metabolism is carried out by proteins and all kinds of small molecules, and replication is carried out by DNA and RNA. That maybe is a clue to the fact that they started out separate rather than together. So my version of the origin of life is it started with metabolism only. ...

___

FREEMAN DYSON is professor of physics at the Institute for Advanced Study, in Princeton. His professional interests are in mathematics and astronomy. Among his many books are Disturbing the Universe, Infinite in All Directions, Origins of Life, From Eros to Gaia, Imagined Worlds, The Sun, the Genome, and the Internet, and most recently A Many Colored Glass: Reflections on the Place of Life in the Universe.

CRAIG VENTER

I have come to think of life in much more a gene-centric view than even a genome-centric view, although it kind of oscillates. And when we talk about the transplant work, genome-centric becomes more important than gene-centric. From the first third of the Sorcerer II expedition we discovered roughly 6 million new genes that has doubled the number in the public databases when we put them in a few months ago, and in 2008 we are likely to double that entire number again. We're just at the tip of the iceberg of what the divergence is on this planet. We are in a linear phase of gene discovery maybe in a linear phase of unique biological entities if you call those species, discovery, and I think eventually we can have databases that represent the gene repertoire of our planet.

One question is, can we extrapolate back from this data set to describe the most recent common ancestor. I don't necessarily buy that there is a single ancestor. It’s counterintuitive to me. I think we may have thousands of recent common ancestors and they are not necessarily so common.

J. CRAIG VENTER: Seth's statement about digitization is basically what I've spent the last fifteen years of my career doing, digitizing biology. That's what DNA sequencing has been about. I view biology as an analog world that DNA sequencing has taken into the digital world. I'll talk about some of the observations that we have made for a few minutes, and then I will talk about once we can read the genetic code, we've now started the phase where we can write it. And how that is going to be the end of Darwinism.

On the reading side, some of you have heard of our Sorcerer II expedition for the last few years where we've been just shotgun sequencing the ocean. We've just applied the same tools we developed for sequencing the human genome to the environment, and we could apply it to any environment; we could dig up some soil here, or take water from the pond, and discover biology at a scale that people really have not even imagined.

The world of microbiology as we've come to know it is based on a technology that is over a hundred years old: seeing what will grow in culture. Only about a tenth of a percent of microbiological organisms will grow in the lab using traditional techniques. We decided to go straight to the DNA world to shotgun sequence what's there, using very simple techniques of filtering seawater into different size fractions, and sequencing everything at once that's in the fractions. ...

___

J. CRAIG VENTER is one of the leading scientists of the 21st century for his visionary contributions in genomic research. He is founder and president of the J. Craig Venter Institute. The Venter Institute conducts basic research that advances the science of genomics; specializes in human genome-based medicine, infectious disease, environmental genomics, synthetic genomics and synthetic life; and explores the ethical and policy implications of genomic discoveries and advances. The Venter Institute employs more than 400 scientists and staff in Rockville, Md., and in La Jolla, Calif. He is the author of A Life Decoded: My Genome: My Life.

GEORGE CHURCH

Many of the people here worry about what life is, but maybe in a slightly more general way, not just ribosomes, but inorganic life. Would we know it if we saw it? It's important as we go and discover other worlds, as we start creating more complicated robots, and so forth, to know, where do we draw the line?

GEORGE CHURCH: We've heard a little bit about the ancient past of biology, and possible futures, and I'd like to frame what I'm talking about in terms of four subjects that elaborate on that. In terms of past and future, what have we learned from the past, how does that help us design the future, what would we like it to do in the future, how do we know what we should be doing? This sounds like a moral or ethical issue, but it's actually a very practical one too.

One of the things we've learned from the past is that diversity and dispersion are good. How do we inject that into a technological context? That brings the second topic, which is, if we're going to do something, if we have some idea what direction we want to go in, what sort of useful constructions we would like to make, say with biology, what would those useful constructs be? By useful we might mean that the benefits outweigh the costs — and the risks. Not simply costs, you have to have risks, and humans as a species have trouble estimating the long tails of some of the risks, which have big consequences and unintended consequences. So that's utility. 1) What we learn from the future and the past 2) the utility 3) kind of a generalization of life.

Many of the people here worry about what life is, but maybe in a slightly more general way, not just ribosomes, but inorganic life. Would we know it if we saw it? It's important as we go and discover other worlds, as we start creating more complicated robots, and so forth, to know, where do we draw the line? I think that's interesting. And then finally — that's kind of a generalization of life, at a basic level — but 4) the kind of life that we are particularly enamored of — partly because of egocentricity, but also for very philosophical reasons — is intelligent life. But how do we talk about that? ...

___

GEORGE CHURCH is Professor of Genetics at Harvard Medical School and Director of the Center for Computational Genetics. He invented the broadly applied concepts of molecular multiplexing and tags, homologous recombination methods, and array DNA synthesizers. Technology transfer of automated sequencing & software to Genome Therapeutics Corp. resulted in the first commercial genome sequence (the human pathogen, H. pylori,1994). He has served in advisory roles for 12 journals, 5 granting agencies and 22 biotech companies. Current research focuses on integrating biosystems-modeling with personal genomics & synthetic biology.

ROBERT SHAPIRO

I looked at the papers published on the origin of life and decided that it was absurd, that the thought of nature of its own volition putting together a DNA or an RNA molecule was unbelievable.

I'm always running out of metaphors to try and explain what the difficulty is. But suppose you took Scrabble sets, or any word game sets, blocks with letters, containing every language on Earth, and you heap them together and you then took a scoop and you scooped into that heap, and you flung it out on the lawn there, and the letters fell into a line which contained the words “To be or not to be, that is the question,” that is roughly the odds of an RNA molecule, given no feedback — and there would be no feedback, because it wouldn't be functional until it attained a certain length and could copy itself — appearing on the Earth.

ROBERT SHAPIRO: I was originally an organic chemist — perhaps the only one of the six of us — and worked in the field of organic synthesis, and then I got my PhD, which was in 1959, believe it or not. I had realized that there was a lot of action in Cambridge, England, which was basically organic chemistry, and I went to work with a gentleman named Alexander Todd, promoted eventually to Lord Todd, and I published one paper with him, which was the closest I ever got to the Lord. I then spent decades running a laboratory in DNA chemistry, and so many people were working on DNA synthesis — which has been put to good use as you can see — that I decided to do the opposite, and studied the chemistry of how DNA could be kicked to Hell by environmental agents. Among the most lethal environmental agents I discovered for DNA — pardon me, I'm about to imbibe it — was water. Because water does nasty things to DNA. For example, there's a process I heard you mention called DNA deamination, where it kicks off part of the coding part of DNA from the units — that was discovered in my laboratory.

Another thing water does is help the information units fall off of DNA, which is called depurination and ought to apply to only one of the subunits — but works under physiological conditions for the pyrimidines as well, and I helped elaborate the mechanism by which water helped destroy that part of DNA structure. I realized what a fragile and vulnerable molecule it was, even if it was the center of Earth life. After water, or competing with water, the other thing that really does damage to DNA, that is very much the center of hot research now — again I can't tell you to stop using it — is oxygen. If you don't drink the water and don't breathe the air, as Tom Lehrer used to say, you should be perfectly safe. ...

___

ROBERT SHAPIRO is professor emeritus of chemistry and senior research scientist at New York University. He has written four books for the general public: Life Beyond Earth (with Gerald Feinberg); Origins, a Skeptic's Guide to the Creation of Life on Earth; The Human Blueprint (on the effort to read the human genome); and Planetary Dreams (on the search for life in our Solar System).

DIMITAR SASSELOV

Is Earth the ideal planet for life? What is the future of life in our universe? We often imagine our place in the universe in the same way we experience our lives and the places we inhabit. We imagine a practically static eternal universe where we, and life in general, are born, grow up, and mature; we are merely one of numerous generations.

This is so untrue! We now know that the universe is 14 billion years old and Earth life is 4 billion years old: life and the universe are almost peers. If the universe were a 55-year-old, life would be a 16-year-old teenager. The universe is nowhere close to being static and unchanging either.

Together with this realization of our changing universe, we are now facing a second, seemingly unrelated realization: there is a new kind of planet out there, named super-Earths, that can provide to life all that our little Earth does. And more.

DIMITAR SASSELOV: I will start the same way, by introducing my background. I am a physicist, just like Freeman and Seth, in background, but my expertise is astrophysics, and more particularly planetary astrophysics. So that means I'm here to try to tell you a little bit of what's new in the big picture, and also to warn you that my background basically means that I'm looking for general relationships — for generalities rather than specific answers to the questions that we are discussing here today.

So, for example, I am personally more interested in the question of the origins of life, rather than the origin of life. What I mean by that is I'm trying to understand what we could learn about pathways to life, or pathways to the complex chemistry that we recognize as life. As opposed to narrowly answering the question of what is the origin of life on this planet. And that's not to say there is more value in one or the other; it's just the approach that somebody with my background would naturally try to take. And also the approach, which — I would agree to some extent with what was said already — is in need of more research and has some promise.

One of the reasons why I think there are a lot of interesting new things coming from that perspective, that is from the cosmic perspective, or planetary perspective, is because we have a lot more evidence for what is out there in the universe than we did even a few years ago. So to some extent, what I want to tell you here is some of this new evidence and why is it so exciting, in being able to actually inform what we are discussing here. ...

___

DIMITAR SASSELOV is Professor of Astronomy at Harvard University and Director, Harvard Origins of Life Initiative. Most recently his research has led him to explore the nature of planets orbiting other stars. Using novel techniques, he has discovered a few such planets, and his hope is to use these techniques to find planets like Earth. He is the founder and director of the new Harvard Origins of Life Initiative, a multidisciplinary center bridging scientists in the physical and in the life sciences, intent on studying the transition from chemistry to life and its place in the context of the Universe.

SETH LLOYD

If you program a computer at random, it will start producing other computers, other ways of computing, other more complicated, composite ways of computing. And here is where life shows up. Because the universe is already computing from the very beginning when it starts, starting from the Big Bang, as soon as elementary particles show up. Then it starts exploring — I'm sorry to have to use anthropomorphic language about this, I'm not imputing any kind of actual intent to the universe as a whole, but I have to use it for this to describe it — it starts to explore other ways of computing.

SETH LLOYD: I'd like to step back from talking about life itself. Instead I'd like to talk about what information processing in the universe can tell us about things like life. There's something rather mysterious about the universe. Not just rather mysterious, extremely mysterious. At bottom, the laws of physics are very simple. You can write them down on the back of a T-shirt: I see them written on the backs of T-shirts at MIT all the time, even in size petite. In addition to that, the initial state of the universe, from what we can tell from observation, was also extremely simple. It can be described by a very few bits of information.

So we have simple laws and simple initial conditions. Yet if you look around you right now you see a huge amount of complexity. I see a bunch of human beings, each of whom is at least as complex as I am. I see trees and plants, I see cars, and as a mechanical engineer, I have to pay attention to cars. The world is extremely complex.